AI Accessibility Companion for 3.7 Billion People

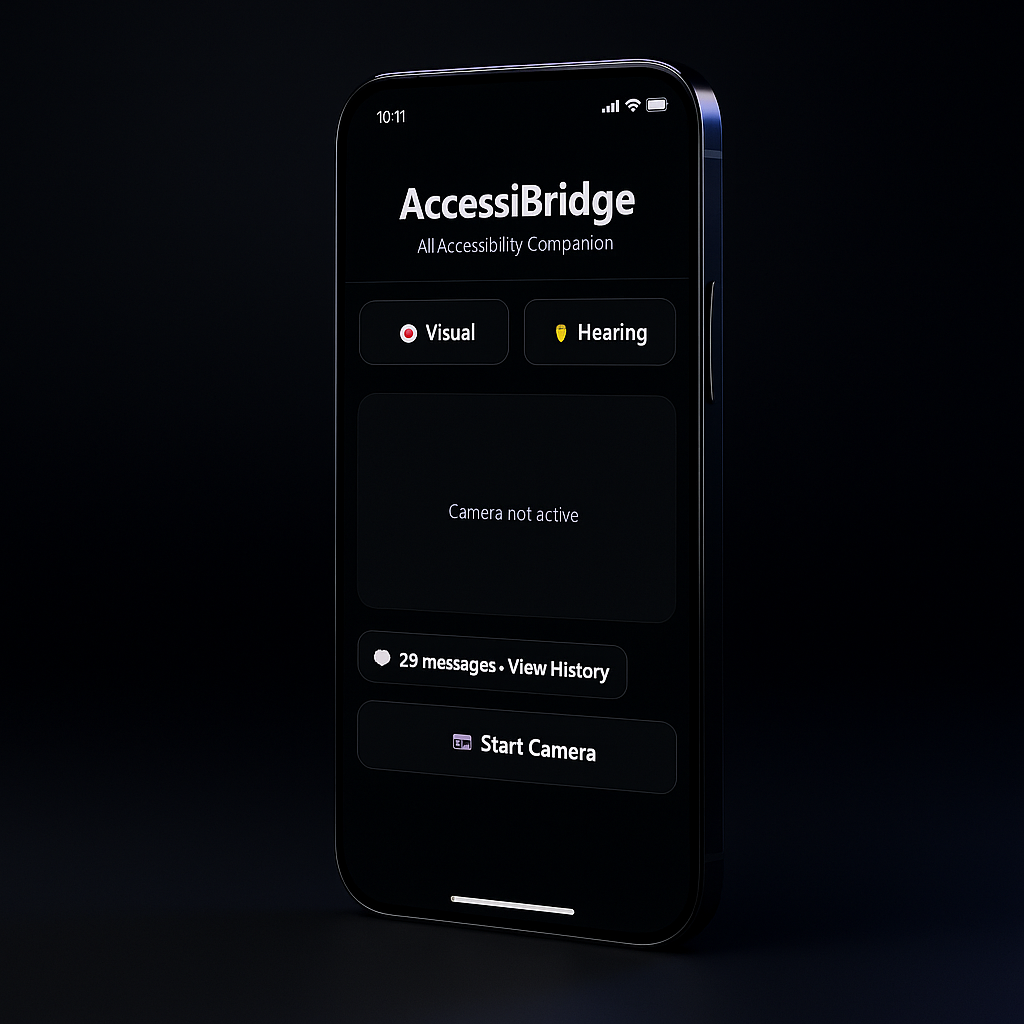

AccessiBridge

Identifying the need

In 2025, I came across a statistic that stopped me: roughly 2.2 billion people worldwide live with a visual impairment, and about 1.5 billion experience hearing loss. Added together that is 3.7 billion people, close to half the global population, although the two groups overlap, so the combined figure is best read as an upper bound. They navigate a world built without them in mind.

Around me, I watched people struggle with basic daily tasks. Visually impaired people couldn't read restaurant menus. Deaf users missed doorbells, fire alarms, and important environmental sounds. The "solutions" were fragmented — five different apps for five different needs, most expensive or poorly designed.

So I built what I needed: an AI companion that's affordable, unified, and actually serves the communities that need it most. This isn't just an app. It's accessibility infrastructure built for people who've been left out of every other solution.

Mapping out the solution

The Gemini 3 Global Hackathon gave me a hard deadline and creative constraint — under 24 hours to build something that worked.

I broke down the problem into two core user groups. Visually impaired users need environmental awareness — what's in front of them, what text says, whether it's safe to move forward. Deaf and hard-of-hearing users need auditory awareness — what sounds are happening, what people are saying, what alerts they might be missing.

From there I designed the architecture: a dual-mode interface (Visual + Hearing), real-time AI analysis with Gemini 2.5 Flash, browser-based speech and audio processing, and a black glassmorphism UI for high contrast and accessibility. When sound detection didn't work initially, I pivoted from AI-based classification to volume-based detection, because working beats perfect when you have 2 hours until the deadline.
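To make the volume-based fallback concrete, here is a minimal sketch of the idea, not the project's actual code. The function names and the decibel thresholds are assumptions chosen for illustration. It computes the root-mean-square (RMS) amplitude of a buffer of audio samples, converts it to decibels relative to full scale (dBFS), and sorts the result into three buckets.

```typescript
// Hypothetical sketch: names and thresholds are illustrative assumptions,
// not taken from AccessiBridge itself.
type SoundLevel = "quiet" | "moderate" | "loud";

/** Root-mean-square amplitude of samples expected in the range [-1, 1]. */
function rms(samples: Float32Array): number {
  if (samples.length === 0) return 0;
  let sumSquares = 0;
  for (let i = 0; i < samples.length; i++) {
    sumSquares += samples[i] * samples[i];
  }
  return Math.sqrt(sumSquares / samples.length);
}

/** Convert a linear amplitude to decibels relative to full scale. */
function toDbfs(amplitude: number): number {
  return amplitude > 0 ? 20 * Math.log10(amplitude) : -Infinity;
}

/** Bucket a sample buffer by loudness; thresholds are assumed defaults. */
function classifyLevel(
  samples: Float32Array,
  loudDb: number = -20,
  moderateDb: number = -40
): SoundLevel {
  const db = toDbfs(rms(samples));
  if (db >= loudDb) return "loud";
  if (db >= moderateDb) return "moderate";
  return "quiet";
}
```

In a browser, the buffer could be filled from the Web Audio API's AnalyserNode.getFloatTimeDomainData. The thresholds would need tuning for each microphone and environment, and loudness alone cannot tell a fire alarm from a slammed door, which is the trade-off the pivot accepted.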

The Product

For blind and low vision users: AI-powered scene descriptions with spatial awareness; text reading (OCR) for documents, signs, and menus; navigation safety assessments with obstacle detection; hands-free voice control with natural conversation; and conversation memory for context-aware follow-ups.
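Conversation memory for follow-ups can be as simple as keeping the last few turns and prepending them to the next question. The sketch below is one possible shape under that assumption. The class name, the turn limit, and the prompt format are all hypothetical, and the call to the model itself is deliberately left out.

```typescript
// Hypothetical sketch: a bounded history of recent turns that is prepended
// to each new question. Not the project's actual implementation.
interface Turn {
  role: "user" | "assistant";
  text: string;
}

class ConversationMemory {
  private turns: Turn[] = [];
  private readonly maxTurns: number;

  constructor(maxTurns: number = 6) {
    this.maxTurns = maxTurns;
  }

  /** Record a turn, discarding the oldest ones beyond the limit. */
  add(role: Turn["role"], text: string): void {
    this.turns.push({ role, text });
    if (this.turns.length > this.maxTurns) {
      this.turns = this.turns.slice(-this.maxTurns);
    }
  }

  /** Build a plain-text prompt: recent history followed by the new question. */
  buildPrompt(question: string): string {
    const history = this.turns.map((t) => `${t.role}: ${t.text}`).join("\n");
    return history ? `${history}\nuser: ${question}` : `user: ${question}`;
  }
}
```

With a history like this, a follow-up such as "What about dessert?" reaches the model together with the earlier menu description, so the reply can stay in context. Capping the history keeps each request small.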

For deaf and hard-of-hearing users: real-time speech-to-text live captions, environmental sound detection with visual alerts, screen flash notifications for urgent sounds, haptic vibration feedback, and exportable transcripts and sound logs.
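As a rough illustration of how alerts and exports like these might be wired together, the sketch below maps an urgency level to a screen flash and a vibration pattern, and formats caption entries into a plain-text transcript. The type names, urgency levels, and pattern durations are assumptions made for this example.

```typescript
// Hypothetical sketch: urgency levels, durations, and names are assumptions.
type Urgency = "urgent" | "notice" | "none";

interface AlertPlan {
  flashScreen: boolean;
  // Alternating vibrate/pause durations in milliseconds.
  vibrationPattern: number[];
}

/** Decide which visual and haptic cues to trigger for a detected sound. */
function planAlert(urgency: Urgency): AlertPlan {
  switch (urgency) {
    case "urgent":
      return { flashScreen: true, vibrationPattern: [200, 100, 200] };
    case "notice":
      return { flashScreen: false, vibrationPattern: [100] };
    default:
      return { flashScreen: false, vibrationPattern: [] };
  }
}

interface CaptionEntry {
  timestampMs: number; // milliseconds since the Unix epoch
  text: string;
}

/** Format caption entries as one ISO-timestamped line each. */
function exportTranscript(entries: CaptionEntry[]): string {
  return entries
    .map((e) => `[${new Date(e.timestampMs).toISOString()}] ${e.text}`)
    .join("\n");
}
```

On devices that support the Vibration API, the pattern array could be passed to navigator.vibrate. Browser support varies, so the visual flash remains the more dependable cue.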

AccessiBridge breaks down the barriers between technology and accessibility, giving 3.7 billion people worldwide a unified tool to navigate their environment with independence and confidence.

Final Product

Tech Stack

What I Learned

Key Takeaways

Building under pressure reveals what matters

With a hard 3:00 AM deadline, I learned to prioritize ruthlessly. Features that 'would be nice' got cut. The result? A cleaner, more focused product than if I'd had weeks.

Accessibility isn't optional — it's foundational

Designing for 3.7 billion people forced me to think differently about UI/UX. High contrast, voice control, visual alerts — these are core features, not add-ons.

Pivot fast when tech doesn't cooperate

The Gemini audio classification API didn't exist in the version I expected. Instead of panicking at 11 PM, I pivoted to volume-based detection in 30 minutes.

Real users need real solutions, not demos

I didn't build AccessiBridge as a hackathon demo that looks good in a video. I built it to actually work — deployed, fully functional, ready to use today.

AI amplifies empathy, but humans drive purpose

Gemini 2.5 Flash powers the intelligence, but the purpose came from deeply understanding who I was building for. AI is a tool for impact, not a replacement for empathy.
