AI-Powered Mental Health for South Africa

MentalMosaic

Identifying the need

During my first month at the OGCTA learnership, I found myself surrounded by students and young professionals who were struggling in silence. South Africa's mental health crisis is real: 1 in 5 South Africans suffer from a mental health disorder, but over 75% of them never receive treatment. The barriers? Stigma, cost, and access.

I was attending the Geekulcha × Microsoft AI for Social Good Hackathon when the brief landed: build something that creates social good. The question I kept returning to was this: how do you make mental health support available to someone who can't afford therapy, doesn't have transport to a clinic, or is too embarrassed to ask for help?

The answer had to fit in their pocket. It had to be free. It had to feel safe. And it had to understand South African culture instead of giving generic "call your therapist" advice. That became MentalMosaic.

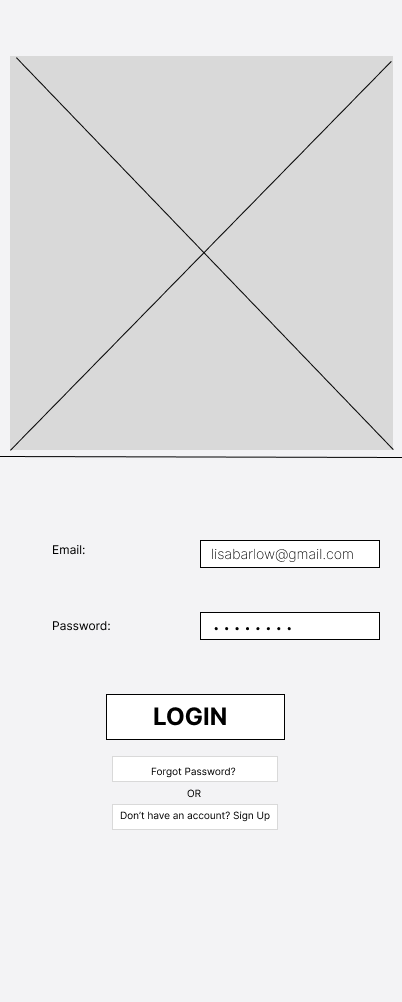

Campaign Ad

MentalMosaic — The Ad

A short-form campaign ad created to bring MentalMosaic to life and communicate its mission.

Mapping out the solution

I started by mapping the landscape. Existing mental health apps such as Headspace, BetterHelp, and Woebot were expensive, not localised, or not AI-powered enough. None of them felt like they were built for someone in Soweto or Pretoria North.

I identified three core pillars: an empathetic AI agent that acknowledges cultural context, mood tracking with journaling, and community connection for peer support.

For the technology, I chose Microsoft Copilot Studio. Because the hackathon was powered by Microsoft, I built the AI agent with Copilot Studio's workflow engine, creating conversation flows that felt natural, empathetic, and culturally aware. The design philosophy was calming colours, no clinical feel, and language that sounds like a friend rather than a medical professional.

The Product

MentalMosaic is a comprehensive mental health platform with four core features: an AI companion for daily check-ins and conversations, mood tracking with historical visualisation, a journaling space with AI-powered reflection prompts, and community features for peer support.

The AI agent was built to recognise when someone needs crisis resources (real South African crisis lines) and when they just need to vent. Cultural sensitivity was built into the conversation flows from day one, not bolted on afterwards.

What I Learned

Key Takeaways

Crisis handling matters most

Building for mental health means handling crisis moments gracefully: the AI needed clear, compassionate pathways to real crisis lines.

Cultural context is non-negotiable

For South African users, the difference between helpful and harmful is understanding the cultural context around mental health and stigma.

MVP constraints force clarity

With limited hackathon time, I prioritised the quality of the AI agent above everything else, which turned out to be exactly the right call.
